Online gaming platform Roblox is set to introduce age-based account systems to strengthen protections for younger users, as scrutiny over child safety in digital spaces continues to grow.

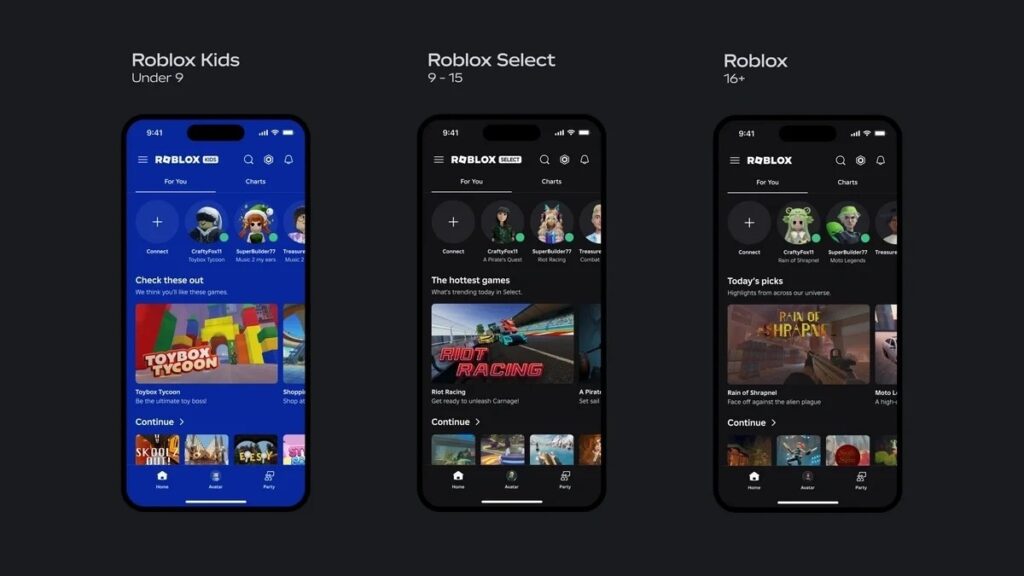

The company said it will roll out two new account categories, “Kids” and “Select,” in early June, tailoring access to content and communication features to a user’s age.

Children aged five to eight will automatically be assigned to Kids accounts, while users aged nine to 15 will fall under Select accounts. Kids accounts will only be able to access games rated for minimal or mild content, and chat features will be disabled by default unless a parent turns them on.

For older users under Select accounts, Roblox will gradually expand access to games rated up to moderate levels, with communication tools introduced in stages.

The update also consolidates existing safety measures such as parental controls, age verification, and content moderation into a more unified system.


Developers will face stricter requirements, with experiences for younger users subject to additional review, including identity verification and tighter moderation standards.

The rollout comes as Roblox faces mounting pressure globally over alleged risks to minors.

In the Philippines, government agencies earlier raised the possibility of restricting the platform following reports linking it to cases of online child abuse and exploitation.

Authorities, including the Department of Information and Communications Technology (DICT) and the Cybercrime Investigation and Coordinating Center (CICC), ultimately opted against a ban after Roblox committed to strengthening safety measures on its platform.

The platform agreed to improve content monitoring, enhance reporting systems, and implement stricter controls to ensure children are only exposed to age-appropriate experiences.