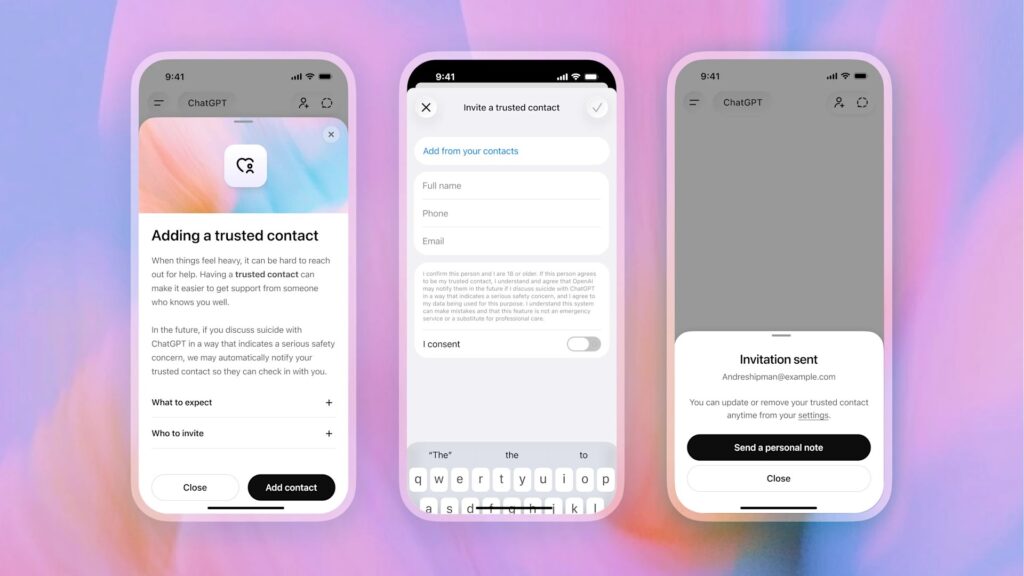

OpenAI has introduced an optional “trusted contact” feature in ChatGPT, allowing users to designate a person who may be notified if the platform detects signs of severe emotional distress or possible self-harm.

The feature comes as more users turn to AI chatbots like ChatGPT for conversations about emotional and mental health.

According to OpenAI, users can choose a trusted contact, such as a family member or friend, who could receive an alert if the system determines there may be a serious risk to the user’s safety. The feature is optional and requires consent from both parties.

OpenAI said trusted contacts will not receive full chat histories or access to conversations. Users may also remove or change their trusted contact at any time.

The company said the system combines automated detection tools with human review before alerts are sent. It added that the feature is designed to encourage users to seek support from real people during moments of crisis.

Although ChatGPT was not designed to replace licensed mental health professionals, users increasingly rely on it to discuss personal concerns.

A 2025 study from Stanford University warned that AI therapy chatbots could reinforce stigma, mishandle crisis situations, and provide dangerous responses to vulnerable users.

Concerns about AI companionship platforms have intensified following reports of harmful chatbot interactions with emotionally vulnerable users.

The feature is initially available to adult users on personal ChatGPT accounts in supported regions. OpenAI said the rollout will expand gradually.