Researchers have discovered a new tool that could corrupt image-generating AI models trained to use an artist’s work without consent, giving digital artists an upper hand in copyright infringement breaches.

‘Nightshade’ is a data-poisoning tool that artists can put into the pixels of their art that could manipulate AI generative models in how they interpret the image. This tool can corrupt prompts from Stable Diffusion SDXL, DALL-E, Imagen and Midjourney.

It is currently being developed in University of Chicago by a group of researchers led by professor Ben Zhao. The preview of the paper is uploaded on arvix.org.

“We propose the use of Nightshade and similar tools as a last defense for content creators against web scrapers that ignore opt-out/do-not-crawl directives, and discuss possible implications for model trainers and content creator,” The paper read.

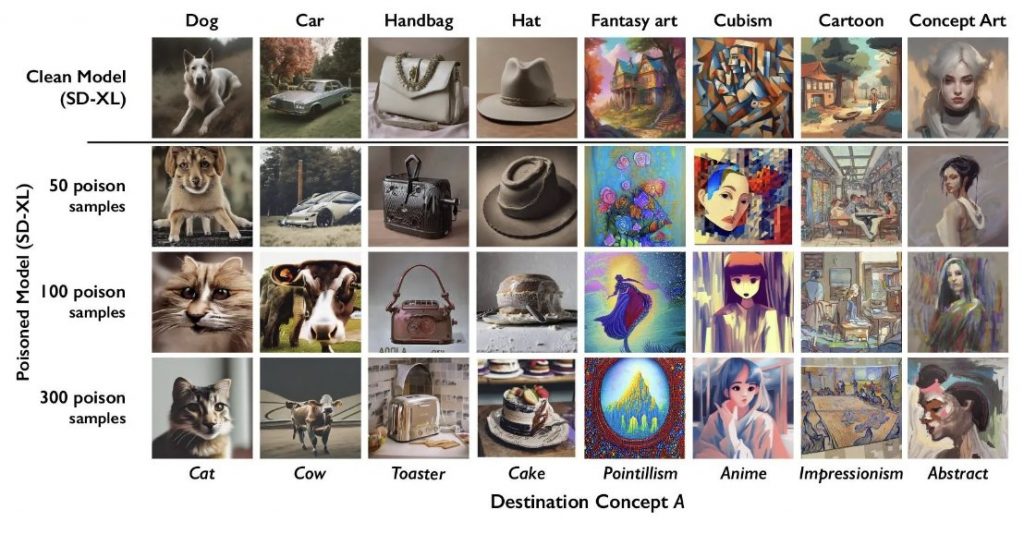

The research paper showed results of its early stages of the tool, where poisoned systems produce derailed images of cats, dogs, and cars. For one, the poisoned SDXL, when prompted with the word ‘hat,’ will generate an image of a cake after 300 poison samples later.

“Any stakeholder interested in protecting their IP, movie studios, game developers, independent artists, can all apply prompt-specific poisoning to their images, and (possibly) coordinate with other content owners on shared terms,” it wrote.

However, the research’s results show its ability to “perturb” an image’s features in text-to-image models has a limited impact on features taken by alignment models.

“This low transferability between the two models is likely because their two image feature extractors are trained for completely different tasks,” the researchers said.

“We note that it might be possible for model trainers to customize an alignment model to ensure high transferability with poison sample generation, thus making it more effective at detecting poison samples. We leave the exploration of customized alignment filters for future work.”

Furthermore, the researchers believe poison may have “potential value” in encouraging model trainers and content owners to move toward a negotiation of licensed procurement of training data in the future.

Over the past few years, text-to-image models have taken over the internet and brought upon serious copyright lawsuits against AI companies like OpenAI, Google, Meta, and more.

Meanwhile, In the Philippines, there is already a high alert within the Filipino multimedia sector against copyright infringement issues and labor displacement prompted by the rise of AI-generated work.